“The king is dead”—Claude 3 surpasses GPT-4 on Chatbot Arena for the first time

On Tuesday, Anthropic's Claude 3 Opus large language model (LLM) surpassed OpenAI's GPT-4 (which powers ChatGPT) for the first time on Chatbot Arena, a popular crowdsourced leaderboard that AI researchers use to gauge the relative capabilities of AI language models. “The king is dead,” software developer Nick Dobos tweeted in a post comparing GPT-4 Turbo and Claude 3 Opus that has been making the rounds on social media. “RIP GPT-4.”

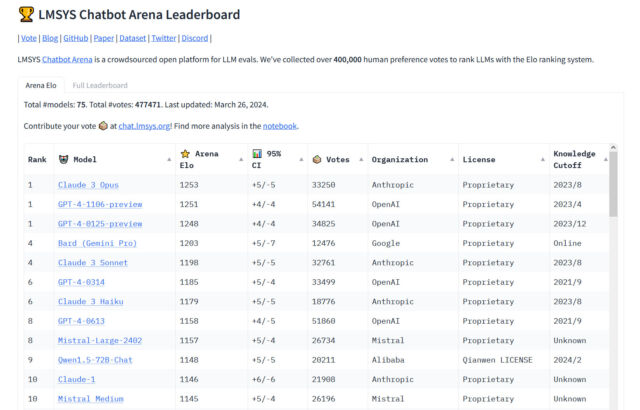

Since GPT-4 was added to Chatbot Arena around May 10, 2023 (the leaderboard launched on May 3 of that year), variations of GPT-4 have consistently sat at the top of the chart until now, so its defeat in the Arena is a notable moment in the relatively short history of AI language models. Haiku, one of Anthropic's smaller models, has also been turning heads with its performance on the leaderboard.

“For the first time, the best available models—Opus for advanced tasks, Haiku for cost and efficiency—are from a vendor that is not OpenAI,” independent AI researcher Simon Willison told Ars Technica. “That's reassuring – we all benefit from the diversity of the top vendors in the field. But GPT-4 is over a year old at this point, and it took that year for anyone else to catch up.”

Benj Edwards

Chatbot Arena is run by the Large Model Systems Organization (LMSYS.org), a research organization dedicated to open models that operates as a collaboration between students and faculty at the University of California, Berkeley, UC San Diego, and Carnegie Mellon University.

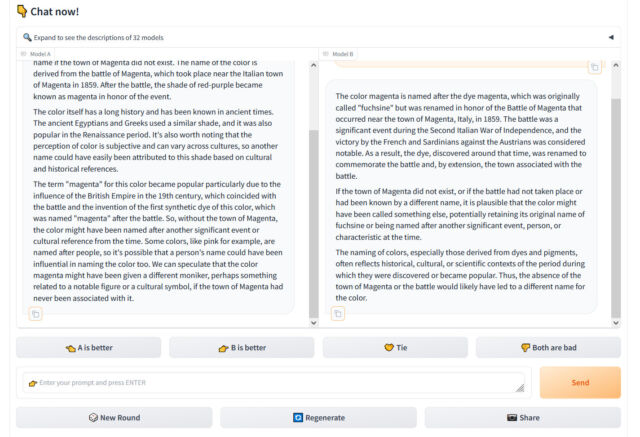

We profiled how the site works in December, but in short, Chatbot Arena presents a user visiting the website with a chat input box and two windows showing output from two unlabeled LLMs. The user's task is to rate which output is better based on whatever criteria the user deems most fitting. Through thousands of these subjective comparisons, Chatbot Arena calculates the “best” models in aggregate and populates the leaderboard, updating it over time.
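To make the aggregation step concrete, here is a minimal sketch of how pairwise votes can be turned into a ranking using an Elo-style rating update. This is an illustration only: the description above does not say which rating method Chatbot Arena uses, so the Elo formula, the `k` factor, the starting rating of 1000, and the model names and votes below are all assumptions, not the site's actual implementation or data.

```python
from collections import defaultdict


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def rate_models(votes, k: float = 32.0, initial: float = 1000.0) -> dict:
    """Apply one Elo update per vote.

    Each vote is (model_a, model_b, winner), where winner is "a", "b",
    or "tie". The k factor and initial rating are assumed values.
    """
    ratings = defaultdict(lambda: initial)
    for model_a, model_b, winner in votes:
        exp_a = expected_score(ratings[model_a], ratings[model_b])
        if winner == "a":
            score_a = 1.0
        elif winner == "b":
            score_a = 0.0
        else:
            score_a = 0.5  # a tie counts as half a win for each side
        delta = k * (score_a - exp_a)
        ratings[model_a] += delta
        ratings[model_b] -= delta  # zero-sum: B loses what A gains
    return dict(ratings)


def leaderboard(ratings: dict) -> list:
    """Return (model, rating) pairs sorted from highest to lowest rating."""
    return sorted(ratings.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical votes between placeholder models, not real Arena data.
    sample_votes = [
        ("model-x", "model-y", "a"),
        ("model-y", "model-z", "tie"),
        ("model-z", "model-x", "b"),
    ]
    for name, rating in leaderboard(rate_models(sample_votes)):
        print(f"{name}: {rating:.1f}")
```

A sequential update like this depends on the order in which votes arrive, which is one reason a real leaderboard might prefer a model fitted over all votes at once. The sketch is only meant to show how many subjective one-on-one judgments can add up to a single ordered list.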

Chatbot Arena is important to researchers because they often find it frustrating to measure the performance of AI chatbots, whose wildly varying outputs are difficult to quantify. In fact, we wrote about how difficult it is to objectively benchmark LLMs in our news piece about the launch of Claude 3. For that story, Willison emphasized the important role of “vibes,” or subjective feelings, in determining the quality of an LLM. “Another case of 'vibes' as a key concept in modern AI,” he said.


The “vibes” sentiment is common in the AI field, where numerical benchmarks that measure knowledge or test-taking ability are frequently cherry-picked by vendors to make their results appear more favorable. “Just had a long coding session with Claude 3 Opus and man it absolutely crushes GPT-4. I don't think standard benchmarks do this model justice,” AI software developer Anton Bacaj tweeted on March 19.

Claude's rise may give OpenAI pause, but as Willison noted, the GPT-4 family itself (though updated several times) is over a year old. Currently, the Arena lists four different versions of GPT-4, which represent incremental updates to the LLM that are frozen in time because each has a unique output style. Some developers who use them through OpenAI's API need that consistency so that their apps built on top of GPT-4's outputs do not break.

These include GPT-4-0314 (the “original” version of GPT-4 from March 2023), GPT-4-0613 (a snapshot of GPT-4 from June 13, 2023, with “improved function calling support,” according to OpenAI), GPT-4-1106-Preview (the launch version of GPT-4 Turbo from November 2023), and GPT-4-0125-Preview (the latest GPT-4 Turbo model, from January 2024, which is intended to reduce cases of “laziness”).

Still, even with four GPT-4 models on the leaderboard, Anthropic's Claude 3 models have been steadily climbing the charts since their release earlier this month. Claude 3's success among AI assistant users has already led some LLM users to replace ChatGPT in their daily workflow, potentially eating away at ChatGPT's market share.

Google's similarly capable Gemini Advanced has also been gaining traction in the AI assistant space. That may put OpenAI on guard for now, but in the long run, the company is preparing new models. It is expected to release a major new successor to GPT-4 Turbo (whether named GPT-4.5 or GPT-5) sometime this year, possibly in the summer. It's clear that the LLM space will be full of competition for the time being, which could make for more interesting shake-ups on the Chatbot Arena leaderboard in the coming months and years.